Compound Unique Indexes in Databases

- Rohit Sharma

- Sep 6, 2021

- 3 min read

Updated: Sep 18, 2021

Hello guys we are going to publish some technical knowledge related to backend engineering.

We are also awaiting your response so that we can improve our writing skills!

So today’s topic is

COMPOUND UNIQUE INDEXES IN THE DATABASE

As you know, we need a database to store data, but one of the most important properties is integrity, so how are we going to maintain it?

The most simple answer is adding checks!

So we are going to discuss here one of those checks in optimize way.

So firstly discuss the keyword which we gonna discuss “compound unique indexes”.So let’s quickly break down the keywords.

Compound simply means a combination of two or more things.

So let’s quickly discuss what is indexes?

Indexing is a way to optimize the performance of a database by minimizing the number of disk accesses required when a query is processed.

And unique means things that don’t repeat.

So now we are familiar with the terminology let’s jump on to the topic!

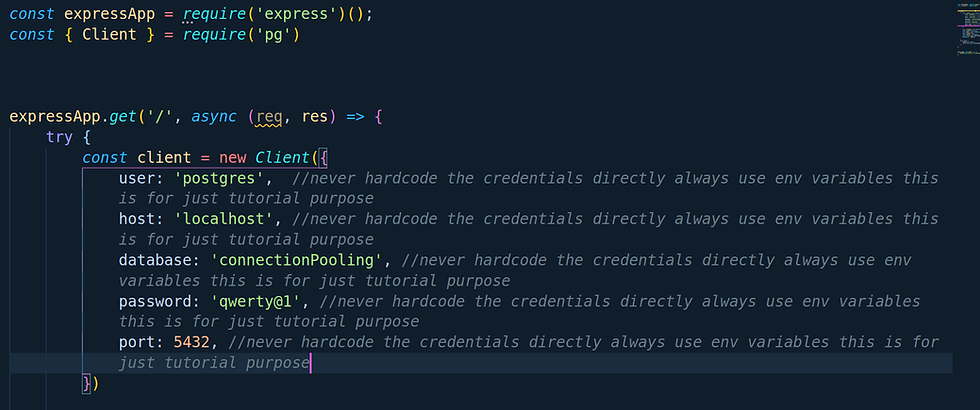

So suppose there you need to create a module of followers so basic database structure will be

users_following

id(primary key) | followed_to_user_id(FK) | followed_by_user_id(FK)

(Note:- This is a basic structure I m using you can use different ways also like you can use JSONB or JSON column too)

So our when suppose user A (id:112) is following to user B(id:114) so after following the record will be looks like this

Insert into users_following(followed_to_user_id,followed_by_user_id) values (112,114);

users_following

id(primary key) | followed_to_user_id(FK) | followed_by_user_id(FK)

1 | 112 | 114

So suppose somehow from the API there is a request for the user The following user B but if we see our use case that one user can follow another user one time only not more than one.

So how are we gonna maintain this integrity?

So our naive approach will be first run a query and check if the requested data already exists in the table or not

SELECT * FROM users_following WHERE followed_to_user_id=112 AND followed_by_user_id=114;

And if no record is found then we will insert it but if we see it here we need to run the first query to execute another query.

By this, we can definitely maintain the integrity but its, not the optimized way So in this case, we can take help compound unique indexes.

We can create an index on the dedicated table that the combination of two values can never be the same in the entire table. So to create an index we will write this syntax

CREATE UNIQUE INDEX users_following_idx ON users_following(followed_to_user_id,followed_by_user_id);

The above looks Lil bit tricky but believe me it’s an easy one let me break down the above syntax.

The users_following_idx is just a meaningful index name you can put any logical name like csworld_index anything which helps you.

users_following(followed_to_user_id,followed_by_user_id);

In the above statement, the users_following is the table name, and (followed_to_user_id,followed_by_user_id) is the attributes of the table on which you want to unique properly it can be at least one at most N attributes it can be one, two, or more than four depends on your business requirement and use case.

So after applying the index when you are going to insert the duplicate record like in our case we user A is going to again follow user B. so when you run the insert command

Insert into users_following(followed_to_user_id,followed_by_user_id) values (112,114);

You gonna get an error from the database that duplicates records on the following index.

Boom! Here we go in this we don’t need to write any select or find query to check if the requested records exist or not in the table you just need to run insert query rest leave to the database.

So this is the pros we talked about so about cons as we know “every good thing comes with good price”. So cons are the ones which are bringing pros. Didn’t get it?

Let me help you here, as we know DB basic operations are CRUD

C - Create

R- Read

U- Update

D- Delete

So when we create an index on the database our read operation becomes fasts but our other operation that is created, update and delete becomes slow because every time a row gets affected from these three the database rebuild the indexes, and rebuilding indexes takes time so the read operation gonna be fast and rest update, delete and create operation gonna becomes slow. So how are we gonna overcome this issue? We can do this by using the replication method. We don’t gonna talk about replication in this blog if you need a blog on the same let us know in the comment box.

So that’s all in this blog this is our first please let us know your feedback in the comment section or any topic on which you need a blog.

Stay Tuned and keep learning!

Comments